I am trying to use Quantum ESPRESSO (QE), but I cannot configure it correctly.

A template for QE looks like the following:

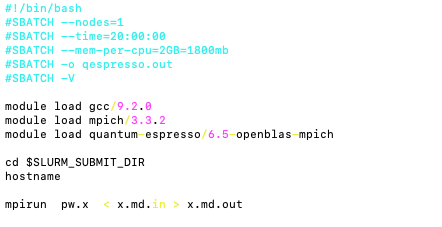

The one I modified looks like the following, but it gives errors

Can anyone help?

Thank you

It’s running now.

Thanks

How about:

#!/bin/bash

#SBATCH --job-name=qe

#SBATCH --ntasks=12

#SBATCH --cpus-per-task=1

#SBATCH --time=24:00:00

#SBATCH --mem-per-cpu=3GB

#SBATCH --mail-user=username@usc.edu

#SBATCH --mail-type=all

#SBATCH --nodes=1

#SBATCH --account=<account_id>

#SBATCH --partition=main

module purge

module load gcc/9.2.0

module load mpich/3.3.2

module load quantum-espresso/6.5-openblas-hdf5

module load fftw/3.3.8-mpich

module load openblas/0.3.7

module load netlib-scalapack/2.1.0-openblas-mpich

module load hdf5/1.10.6-mpich

cd ${SLURM_SUBMIT_DIR}

mpirun --bind-to core -np 12 pw.x -i x.md.in > x.md.out

Thank you, Molguin. Your Slurm file works faster than the one above.

Depending on the size of the system you are simulating, increasing the number of processors running in parallel will reduce the time to solution.

The Slurm #SBATCH directives that control the number of parallel workers are:

#SBATCH --ntasks=value

#SBATCH --nodes=value

Quantum ESPRESSO is well parallelized with MPI and scales well, so I would recommend the following Slurm directives:

#!/bin/bash

#SBATCH --job-name=qe

#SBATCH --ntasks=48

#SBATCH --cpus-per-task=1

#SBATCH --time=48:00:00

#SBATCH --mem=0 # use all of the available shared memory

#SBATCH --mail-user=username@usc.edu

#SBATCH --mail-type=all

#SBATCH --nodes=2

#SBATCH --account=<account_id>

#SBATCH --partition=main

#SBATCH --constraint=xeon-4116 # 24 cpus per node

#SBATCH --exclusive

module purge

module load gcc/9.2.0

module load mpich/3.3.2

module load quantum-espresso/6.5-openblas-hdf5

module load fftw/3.3.8-mpich

module load openblas/0.3.7

module load netlib-scalapack/2.1.0-openblas-mpich

module load hdf5/1.10.6-mpich

cd ${SLURM_SUBMIT_DIR}

mpirun --bind-to core -np 48 pw.x -i x.md.in > x.md.out

For 3 nodes, change the following:

#SBATCH --ntasks=72

#SBATCH --nodes=3

mpirun --bind-to core -np 72 pw.x -i x.md.in > x.md.out

For 4 nodes, change the following:

#SBATCH --ntasks=96

#SBATCH --nodes=4

mpirun --bind-to core -np 96 pw.x -i x.md.in > x.md.out

And so on.
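The pattern above is just ntasks = nodes x 24, so the arithmetic can be scripted instead of edited by hand. Below is a rough sketch, not a tested recipe for this cluster: it assumes 24 cores per node (the xeon-4116 nodes), and `CORES_PER_NODE`, `tasks_for_nodes`, `sbatch_command`, and the script name `qe.slurm` are made-up names for illustration. It relies on two standard Slurm behaviors: options given on the `sbatch` command line override the matching #SBATCH lines, and `SLURM_NTASKS` is set inside the job when `--ntasks` is specified.

```shell
#!/bin/bash
# Sketch: derive --ntasks from the node count instead of editing it by hand.
# Assumption: every node has 24 cores (xeon-4116 constraint).
CORES_PER_NODE=24

# Print the --ntasks value for a given number of nodes.
tasks_for_nodes() {
    local nodes="$1"
    echo $(( nodes * CORES_PER_NODE ))
}

# Print the sbatch command for a given node count.
# qe.slurm is a hypothetical name for the job script.
sbatch_command() {
    local nodes="$1"
    echo "sbatch --nodes=${nodes} --ntasks=$(tasks_for_nodes "${nodes}") qe.slurm"
}

# Show the command for a 3-node run; copy and run it to submit.
sbatch_command 3

# Inside qe.slurm, the mpirun line can then read the task count from Slurm
# rather than hard-coding it:
#   mpirun --bind-to core -np "${SLURM_NTASKS}" pw.x -i x.md.in > x.md.out
```

With this, moving from 2 to 4 nodes only means changing the number passed to `sbatch_command`, and the `-np` value in the job script always matches what was requested.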

Thank you, Molguin. That’s a really good explanation.

Hi Molguin,

Something seems to be missing from the QE module. When I try to use pw_export.x, it gives an error.

I found a possible solution for the missing file here: https://www.mail-archive.com/users@lists.quantum-espresso.org/msg38364.html

Is it possible to apply that fix on our system?

Thank you